Scala commands for Spark

Welcome to the world of basic Scala commands for Apache Spark. Are you looking for a list of Scala commands that are useful for Spark developers? Or are you keen to explore the basic Scala commands in Apache Spark, with examples? Then you have landed on the right page, which covers the standard basic Scala commands for Apache Spark.

If you are keen to learn the technology, take the advanced certification course from the best Apache Spark training institute, which can guide you from beginner to advanced level. Don’t just dream of becoming a certified pro developer; achieve it by choosing the best Apache Spark training institute in Bangalore, with world-class trainers.

We at Prwatech have listed some of the top basic Scala commands for Apache Spark that every Spark developer should know. Follow the Scala commands for Spark below, and learn the advanced Apache Spark course from the best Spark trainers.

Apache Spark Basic Scala Commands

Expressions are computable statements

scala> 12 * 10

res0: Int = 120

You can output the results of expressions using println:

scala> println("Hello Prwatech")

scala> println("Hello," + "Prwatech")

Values

You can name the result of an expression with the val keyword.

Here, a name such as x is a value. Referencing a value doesn’t re-compute it, and a value cannot be re-assigned.
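As a small illustration of naming a result with val, here is a minimal sketch. The names x, doubled and y are only examples and do not come from the original screenshots.

```scala
// Name the result of an expression with val.
val x = 6 + 4        // x: Int = 10

// Referencing x reuses the stored result; 6 + 4 is not evaluated again.
val doubled = x * 2  // 20

// A val cannot be re-assigned; uncommenting the next line is a compile error.
// x = 3

// The type is inferred, but it can also be written explicitly.
val y: Int = 6 + 4
```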

Variable

Variables are like values, except you can re-assign them. You can define a variable with the var keyword.

var x = 6 + 4

x = 10

Blocks

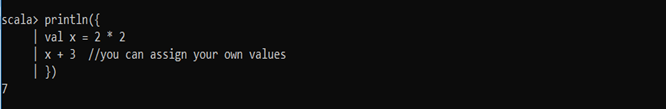

You can combine expressions by surrounding them with {}. We call this a block.

The result of the last expression in the block is the result of the overall block too.
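A block can be sketched as follows. The name result is just an illustrative choice.

```scala
// A block groups several expressions inside { }.
// Its value is the value of the last expression, here x + 1.
val result = {
  val x = 1 + 1
  x + 1
}
// result is 3
```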

Functions

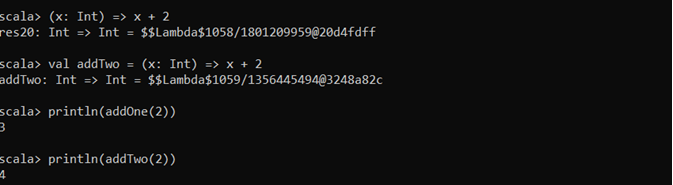

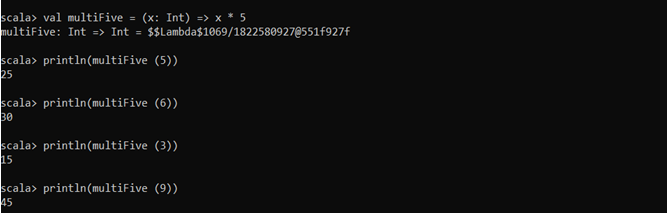

Functions are expressions that take parameters.

You can define an anonymous function (i.e. one with no name) that returns a given integer plus two.

On the left of => is a list of parameters. On the right is an expression involving the parameters.
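The anonymous function described above might look like the sketch below. The surrounding names seven and shifted are assumptions added only so the results can be inspected.

```scala
// An anonymous function: the parameter list is on the left of =>,
// and the expression x + 2 is on the right.
// Here it is applied immediately to the argument 5.
val seven = ((x: Int) => x + 2)(5)                 // 7

// Anonymous functions are often passed straight to other methods.
val shifted = List(1, 2, 3).map((x: Int) => x + 2) // List(3, 4, 5)
```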

You can also name functions.

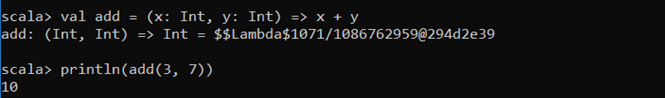

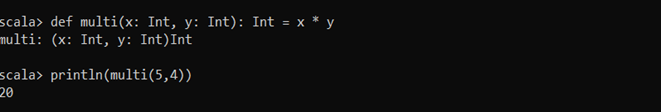

Functions may take multiple parameters.

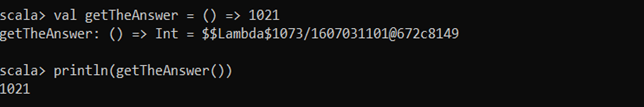

Or they can take no parameters.
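The three cases (a named function, several parameters, no parameters) can be sketched together. The names addTwo, plus and getTheAnswer are illustrative, not taken from the screenshots.

```scala
// A named function: the function value is bound to a name with val.
val addTwo = (x: Int) => x + 2

// A function with multiple parameters.
val plus = (x: Int, y: Int) => x + y

// A function with no parameters.
val getTheAnswer = () => 42

val a = addTwo(1)       // 3
val b = plus(1, 2)      // 3
val c = getTheAnswer()  // 42
```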

Methods

Methods look and behave very much like functions, but there are a few key differences between them.

Methods are defined with the def keyword.

Notice how the return type is declared after the parameter list and a colon: Int.

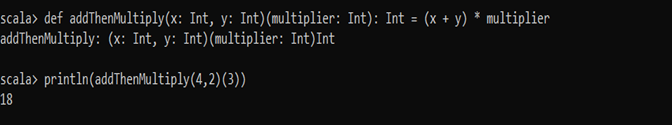

Methods can take multiple parameter lists.
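A minimal sketch of both points, assuming illustrative method names add and addThenMultiply:

```scala
// A method: def, then the name, the parameter list, a colon and the
// return type (Int), and finally the body.
def add(x: Int, y: Int): Int = x + y

// A method with two parameter lists.
def addThenMultiply(x: Int, y: Int)(multiplier: Int): Int = (x + y) * multiplier

val sum = add(1, 2)                     // 3
val product = addThenMultiply(1, 2)(3)  // 9
```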

Classes

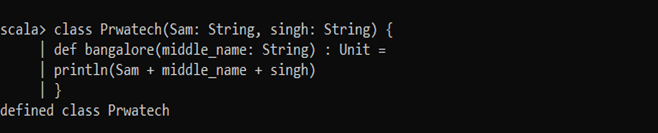

You can define classes with the class keyword followed by its name and constructor parameters.

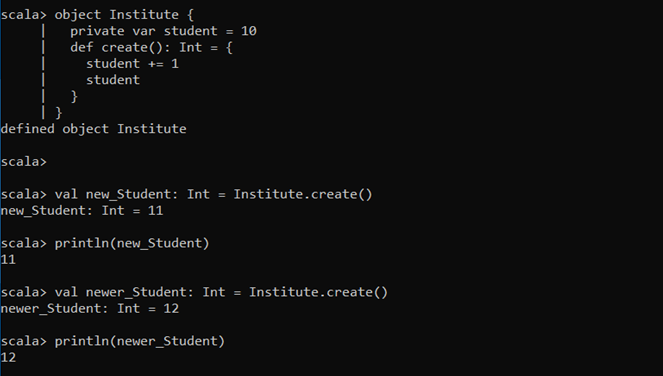
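As a hedged sketch of a class definition, the example below uses a hypothetical Greeter class with two constructor parameters:

```scala
// class keyword, then the name, then the constructor parameters.
class Greeter(prefix: String, suffix: String) {
  // A method that uses the constructor parameters.
  def greet(name: String): String = prefix + name + suffix
}

// Create an instance with new and call a method on it.
val greeter = new Greeter("Hello, ", "!")
val message = greeter.greet("Scala developer")  // "Hello, Scala developer!"
```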

Objects

Objects are single instances of their own definitions. You can think of them as singletons of their own classes.

You can define objects with the object keyword.

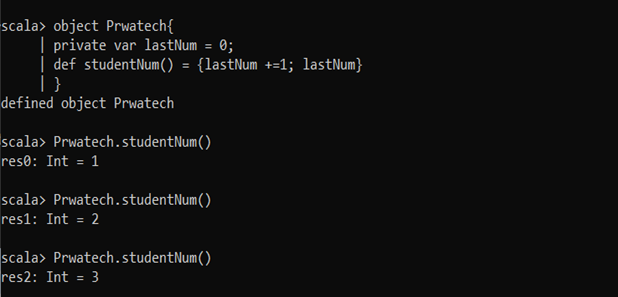
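An object definition could look like this sketch. IdFactory is an illustrative name; the point is that there is exactly one instance, so its counter is shared by every caller.

```scala
// An object is defined with the object keyword and has exactly one instance.
object IdFactory {
  private var counter = 0

  // Each call increments the shared counter and returns the new value.
  def create(): Int = {
    counter += 1
    counter
  }
}

// Members are accessed through the object's name; no new is needed.
val firstId = IdFactory.create()   // 1
val secondId = IdFactory.create()  // 2
```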

Singleton Object

Scala has no static keyword; singleton objects are used instead.

The constructor of a singleton object is executed when the object is first used.

An object can have all the features of a class, except that parameters cannot be passed to its constructor.

Objects can be imported from anywhere in the program.

Singleton Objects are used in the following cases:

When a singleton instance is required for co-ordinating a service.

When a single immutable instance could be shared for efficiency purposes.

When an immutable instance is required for utility functions or constants.

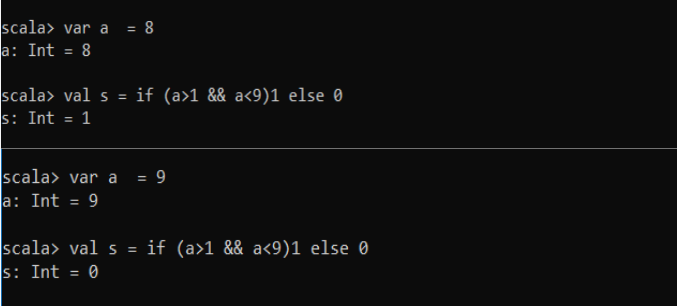
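The utility-and-constants use case can be sketched as below. AppDefaults and its members are hypothetical names chosen for illustration; they are not part of Spark or of the original article.

```scala
// Hypothetical singleton holding shared constants and a utility function.
object AppDefaults {
  // The object body acts as its constructor; it runs once, on first use.
  val AppName: String = "demo-app"
  val Partitions: Int = 4

  def describe(): String = AppName + " with " + Partitions + " partitions"
}

// A member of the object can be imported wherever it is needed.
import AppDefaults.describe

val summary = describe()  // "demo-app with 4 partitions"
```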

If else Condition

Command:- var a = 8

val s = if (a > 1 && a < 9) 1 else 0

Command:- var a = 9

val s = if (a > 1 && a < 9) 1 else 0

With a = 8 the condition is true and s is 1; with a = 9 it is false and s is 0.

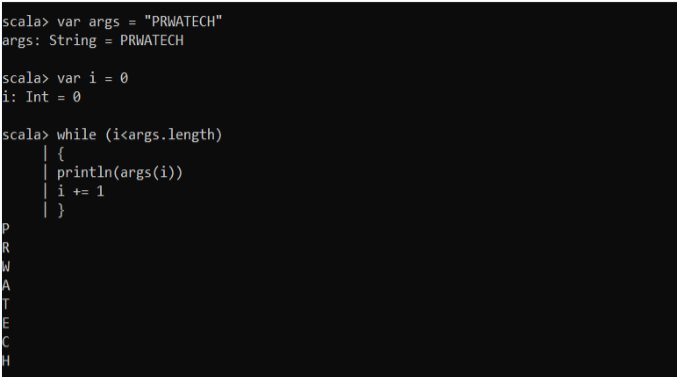

While Loop

Command:- var args = "PRWATECH"

var i = 0

while (i < args.length) {

println(args(i))

i += 1

}

Foreach Loop

Command:- var args = "SCALA"

args.foreach(println)

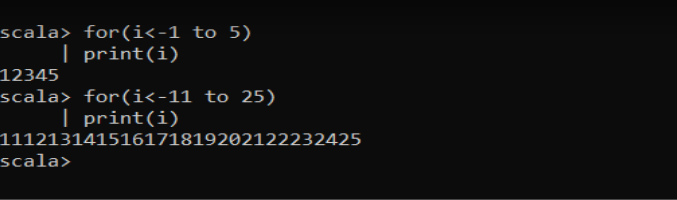

For Loop

Command:- for (i <- 1 to 5)

print(i)

Command:- for (i <- 11 to 25)

print(i)

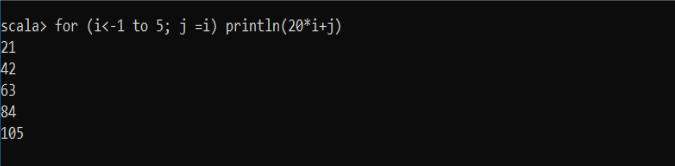

Nested for loop

Command:- for (i <- 1 to 5; j = i) println(20 * i + j)

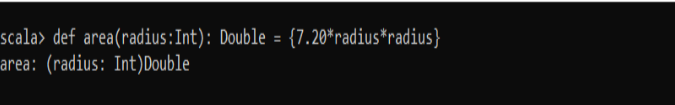
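To make the difference between a second generator and a value definition explicit, here is a small sketch. It uses yield to collect results instead of printing them; the names pairs and values are illustrative.

```scala
// Two generators (<-): the inner range runs fully for every value of i,
// which is what a nested loop does.
val pairs = for (i <- 1 to 3; j <- 1 to 2) yield (i, j)
// pairs contains (1,1), (1,2), (2,1), (2,2), (3,1), (3,2)

// With j = i, j is a value definition rather than a generator,
// so there is exactly one iteration per value of i.
val values = for (i <- 1 to 5; j = i) yield 20 * i + j
// values contains 21, 42, 63, 84, 105
```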

How to write a function

First define the function and then call it with the value

Command:- def area(radius: Int): Double = { 7.20 * radius * radius }

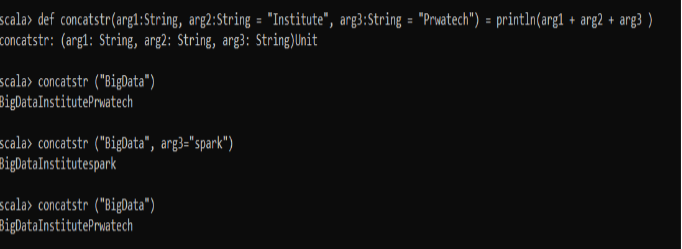
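Defining and then calling the function can be sketched as follows. The constant 7.20 is kept exactly as it appears in the command above, and the argument 2 is an arbitrary example value.

```scala
// Define the function first (constant 7.20 taken as-is from the article).
def area(radius: Int): Double = { 7.20 * radius * radius }

// Then call it with a value; the Int argument is widened to Double.
val result = area(2)  // approximately 28.8
```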

Argument to function

Command:- def concatstr(arg1: String, arg2: String = "Institute", arg3: String = "Prwatech") = println(arg1 + arg2 + arg3)

– concatstr("BigData")

– concatstr("BigData", arg3 = "spark")

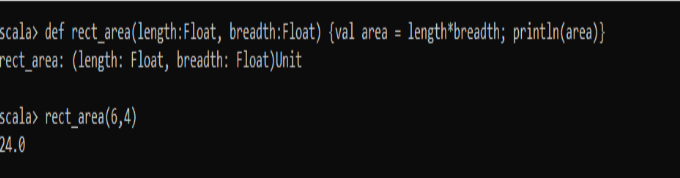

Scala Procedure

Scala has a special kind of function that doesn’t return any value.

If a function is defined without an “=” symbol before its body, its return type is Unit.

Such functions are called procedures.

Command:- def rect_area(length: Float, breadth: Float) { val area = length * breadth; println(area) }
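Newer Scala releases deprecate this procedure syntax (Scala 2.13) or no longer accept it (Scala 3), so it may help to see the same idea with the Unit return type written out. This is a sketch; rectArea and returned are illustrative names.

```scala
// The same idea as the procedure above, with the return type written
// explicitly as Unit and an = before the body.
def rectArea(length: Float, breadth: Float): Unit = {
  val area = length * breadth
  println(area)
}

// The call prints the area; the value it returns is just (), the Unit value.
val returned: Unit = rectArea(2.0f, 3.0f)  // prints 6.0
```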

Scala Collections

Scala has a rich library of collections, including:

Array

ArrayBuffers

Maps

Tuples

Lists

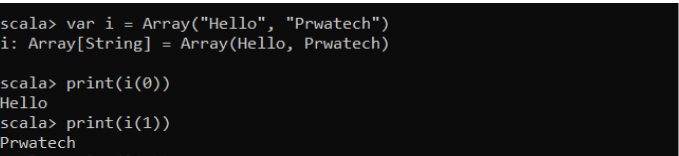
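As a quick orientation, the sketch below creates one instance of each collection listed above. All names and sample values are assumptions chosen for illustration.

```scala
import scala.collection.mutable.ArrayBuffer

// Array: fixed size, all elements of the same type.
val words = Array("Hello", "Prwatech")

// ArrayBuffer: a growable array; += appends an element in place.
val buffer = {
  val b = ArrayBuffer(1, 2, 3)
  b += 4
  b
}

// Map: key -> value pairs.
val wordCounts = Map("spark" -> 2, "scala" -> 1)

// Tuple: a fixed number of values that may have different types.
val pair = ("spark", 2)

// List: an immutable sequence.
val numbers = List(1, 2, 3)

val firstWord = words(0)          // "Hello"
val sparkCount = wordCounts("spark")  // 2
val tupleName = pair._1           // "spark"
val firstNumber = numbers.head    // 1
```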

Array

An array is a collection of elements of the same type.

Command:- var i = Array("Hello", "Prwatech")

print(i(0))

print(i(1))

Array Buffers

Commands for initializing ArrayBuffers: import scala.collection.mutable.ArrayBuffer