Your Learning Manager Gets in Touch with You

Share your learning objectives and get oriented with our web and mobile platform. Talk to your personal learning manager to resolve any questions you have.

Live Interactive Online Session with Your Instructor

Live screen sharing, step-by-step demonstrations and live Q&A, led by industry experts. Missed a class? No problem. We record every class and upload it to your LMS.

Access our Extensive Learning Repository

We have pre-populated your learning platform with previous class recordings and presentations. You will have lifetime access to the Learning Repository.

Solve a Live Industry Use Case

Projects developed by industry experts give you experience solving the kinds of real-world problems you will face in the corporate world.

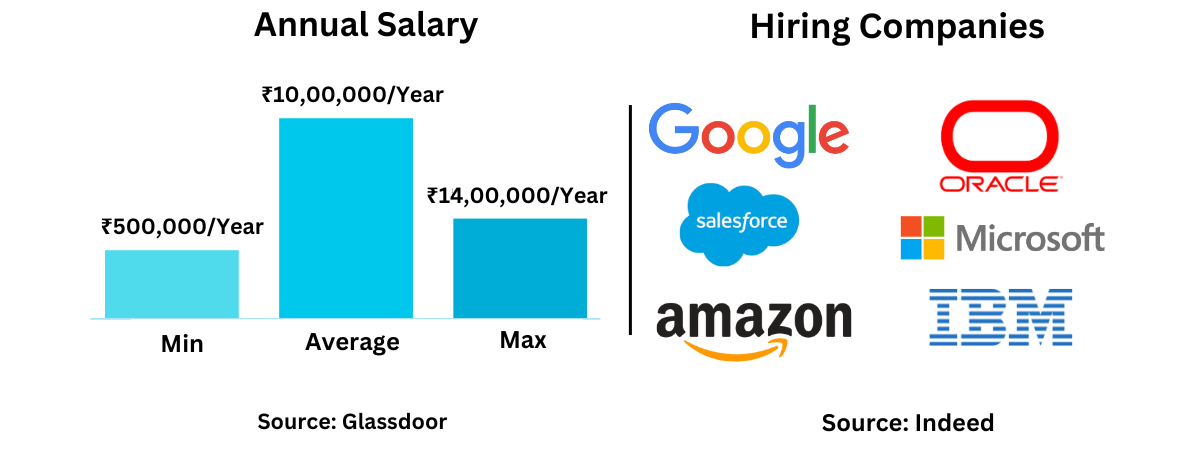

Get Certified and Fast Track Your Career Growth

Earn a valued certificate. Get help creating a professionally written CV, plus guidance on interview preparation and common interview questions.