Hadoop Training Institutes in Pune: Prwatech is a leading provider of Hadoop training in Pune, offering a world-class advanced course backed by our advanced learning management system, with the aim of building a pool of expert professionals to meet global industry requirements. Today, Prwatech has grown into one of the leading talent development companies for Hadoop training and placement in Pune, offering learning solutions to institutions, corporate clients, and individuals.

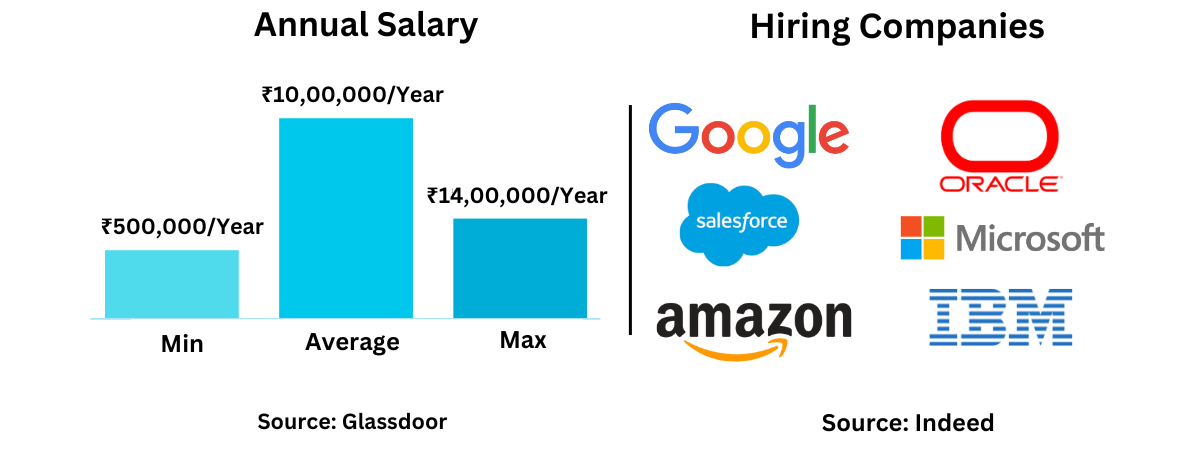

Prwatech's Hadoop training and placement program in Pune prepares you for global certifications from Hortonworks, Cloudera, and others. The course is especially useful for software professionals and engineers with a programming background. Prwatech offers Hadoop training at a choice of locations across Pune. Our certified, experienced professionals guide you from beginner to advanced level. Take the Pro certification course under professionals with 20+ years of experience, with 100% placement assurance.

Our Hadoop training institutes in Pune are equipped with exceptional infrastructure and labs. For Hadoop training in Pune with placement, enroll at any one of the Prwatech training centers.

Pre-requisites for Hadoop Training in Pune

- Basic knowledge of core Java.

- Basic knowledge of the Linux environment is useful but not essential.

Who Can Enroll at Hadoop Training Center in Pune?

This course is designed for those who:

- Want to build big data projects using Hadoop and Hadoop Ecosystem components.

- Want to develop MapReduce programs.

- Want to handle huge amounts of data.

- Have a programming background and wish to take their career to the next level.

Why is Hadoop used for Big Data analytics?

Hadoop is changing how Big Data, especially unstructured data, is handled. Let's look at how the Apache Hadoop software library, which is a framework, plays a vital role in handling Big Data. Apache Hadoop enables the distributed processing of large data sets across clusters of computers using simple programming models.
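To make the "simple programming model" concrete, here is a minimal sketch of the map, shuffle, and reduce steps for a word count, written in plain Python. It does not use Hadoop itself; the function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) are illustrative assumptions, and a real job would run these steps in parallel across the cluster.

```python
from collections import defaultdict


def map_phase(line):
    """Emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Group values by key, as the framework does between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(word, counts):
    """Combine all counts collected for one word into a single total."""
    return word, sum(counts)


def word_count(lines):
    """Run the three steps in sequence on an in-memory list of lines."""
    pairs = [pair for line in lines for pair in map_phase(line)]
    return dict(reduce_phase(word, counts) for word, counts in shuffle(pairs).items())
```

Calling `word_count(["big data", "Big cluster"])` counts "big" twice and each of the other words once. The programmer only writes the map and reduce logic; distribution and grouping are the framework's job.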

It is designed to scale up from single servers to a large number of machines, each offering local computation and storage. Rather than depending on hardware to provide high availability, the library itself is built to detect and handle failures at the application layer, so it delivers a highly available service on top of a cluster of computers, each of which may be prone to failure.
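The idea of handling failures in software rather than hardware can be illustrated with a small simulation. This is only a sketch under simplifying assumptions: `NodeFailure`, `run_on_node`, and `run_with_retries` are hypothetical names, and node failure is modeled as membership in a `failed_nodes` set rather than detected over a network.

```python
class NodeFailure(Exception):
    """Raised when the simulated node cannot run the task."""


def run_on_node(node, task, failed_nodes):
    """Run the task on one node, or raise if that node is marked as failed."""
    if node in failed_nodes:
        raise NodeFailure(node)
    return task()


def run_with_retries(task, nodes, failed_nodes):
    """Try each node in order and return (node, result) from the first success."""
    for node in nodes:
        try:
            return node, run_on_node(node, task, failed_nodes)
        except NodeFailure:
            continue  # this node is down, so reschedule on the next one
    raise RuntimeError("task failed on every node")
```

With nodes `["n1", "n2", "n3"]` and `n1` marked as failed, the task is simply rescheduled on `n2`, so the caller still gets a result even though one machine was unavailable.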

HDFS is designed to run on commodity hardware. It stores large files, typically gigabytes to terabytes in size, across multiple machines. HDFS exposes data location information to the job tracker and task trackers. The job tracker schedules map or reduce tasks on task trackers with awareness of the data location. This simplifies the process of data management. The two main parts of Hadoop are the data processing framework and HDFS.
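Scheduling with awareness of data location can be sketched as a simple preference rule: run the task on a free node that already stores the block, and only fall back to another node if none is available. The function `pick_node` and its return labels are illustrative assumptions, not Hadoop's actual scheduler API.

```python
def pick_node(block_replicas, free_nodes):
    """Choose where to run a task that reads one block.

    block_replicas: set of node names that store a copy of the block.
    free_nodes: list of node names that currently have a free task slot.
    """
    # Prefer a node that already holds the data, so nothing is copied.
    for node in free_nodes:
        if node in block_replicas:
            return node, "data-local"
    # Otherwise use any free node and read the block over the network.
    if free_nodes:
        return free_nodes[0], "remote"
    # No capacity at all: the task has to wait.
    return None, "wait"
```

For a block stored on `n2` and `n3`, with `n1` and `n2` free, the rule selects `n2`, which avoids moving the block across the network.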

HDFS is a rack-aware file system, which helps it handle data effectively. HDFS implements a single-writer, multiple-reader model and supports operations to read, write, and delete files, and operations to create and delete directories.
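Rack awareness matters most when choosing where to put block replicas. The sketch below follows the commonly documented default HDFS policy for a replication factor of three: the first replica on the writer's node, the second on a node in a different rack, and the third on another node in that same remote rack. The function `place_replicas` and the `topology` mapping are hypothetical, and the sketch picks nodes deterministically, whereas HDFS chooses among eligible nodes at random.

```python
def place_replicas(writer, topology):
    """Return three nodes for a block written from `writer`.

    topology maps a rack name to the list of node names in that rack.
    """
    rack_of = {node: rack for rack, nodes in topology.items() for node in nodes}
    local_rack = rack_of[writer]

    # Find the first other rack with at least two nodes for replicas 2 and 3.
    for rack, nodes in topology.items():
        if rack != local_rack and len(nodes) >= 2:
            return [writer, nodes[0], nodes[1]]

    raise ValueError("need a second rack with at least two nodes")
```

With `{"rack1": ["a", "b"], "rack2": ["c", "d"]}` and writer `a`, the replicas land on `a`, `c`, and `d`. Losing all of `rack1` still leaves two copies, while only one copy had to cross between racks during the write.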